Introduction

For years, high-performance React code often meant writing for the runtime and for the reader at the same time. You built the feature, then you layered on useMemo, useCallback, and React.memo to stop parts of the tree from re-rendering too often. That pattern worked, but it came with a cost: more code, more mental overhead, and more ways to get performance optimization subtly wrong.

The React 19 Compiler changes that tradeoff.

Instead of asking developers to hand-place memoization hints across a codebase, React now offers a build-time compiler that analyzes components and hooks, understands the Rules of React, and injects optimization logic automatically. That does not mean performance work disappears. It means the default unit of optimization shifts from handwritten hook choreography to compiler-guided analysis.

That distinction matters. The compiler is not a magic cache for every expensive function in your application, and it does not eliminate the need to profile real bottlenecks. What it does is remove a large category of routine, fragile memoization work that previously cluttered component code and made reviews harder than they needed to be.

Core Content

Why manual memoization became a frontend tax

The old approach to React performance was workable but brittle. Developers would wrap components in React.memo, wrap derived values in useMemo, and wrap handlers in useCallback, all to preserve referential stability and reduce unnecessary work during updates.

The problem was not just verbosity. The real issue was reliability.

A component could look optimized and still fail in practice. React's compiler introduction shows a common case: even if a handler is wrapped in useCallback, creating a fresh inline arrow function for each rendered item can still break memoization at the child boundary. This is exactly the sort of bug teams shipped because the code looked "performance-aware" while still doing extra work.
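To make that failure mode concrete, here is a minimal sketch in plain TypeScript. It assumes a simplified stand-in for the shallow props comparison that React.memo performs; `shallowEqualProps`, `propsWithInlineArrow`, and `propsWithStableHandler` are hypothetical names for illustration, not React APIs.

```typescript
// Simplified stand-in for the shallow props check behind React.memo.
type Props = Record<string, unknown>;

function shallowEqualProps(prev: Props, next: Props): boolean {
  const prevKeys = Object.keys(prev);
  const nextKeys = Object.keys(next);
  if (prevKeys.length !== nextKeys.length) return false;
  return prevKeys.every(
    (key) =>
      Object.prototype.hasOwnProperty.call(next, key) &&
      Object.is(prev[key], next[key]),
  );
}

// Plays the role of a handler kept stable by useCallback.
const selected: number[] = [];
const handleSelect = (id: number): void => {
  selected.push(id);
};

// Hypothetical parent render: wraps the stable handler in a fresh arrow per item.
function propsWithInlineArrow(id: number): Props {
  return { id, onClick: () => handleSelect(id) };
}

// Hypothetical parent render: passes the stable handler through unchanged.
function propsWithStableHandler(id: number): Props {
  return { id, onSelect: handleSelect };
}

// Compare the props produced by two consecutive "renders" of the same item.
const inlineArrowCanSkip = shallowEqualProps(
  propsWithInlineArrow(1),
  propsWithInlineArrow(1),
);
const stableHandlerCanSkip = shallowEqualProps(
  propsWithStableHandler(1),
  propsWithStableHandler(1),
);

console.log({ inlineArrowCanSkip, stableHandlerCanSkip });
```

In this model the inline-arrow version can never skip, because each render creates a new function identity, even though the handler it wraps is stable.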

That produced a bad engineering loop:

- Developers added memoization defensively, not always based on profiling.

- Code reviews became filled with style-level debates about useCallback.

- Seemingly harmless refactors could break referential equality.

- Cleanup was risky because removing memoization could change behavior or effect timing.

The compiler addresses this by moving the decision closer to where it belongs: the build step, where code can be analyzed systematically instead of being judged heuristically by humans scanning pull requests.

What the React 19 Compiler actually does

At a high level, React describes the compiler as a build-time tool that automatically optimizes React applications. It is delivered as a build plugin (babel-plugin-react-compiler) rather than as a runtime feature of React itself. Its main purpose is to handle memoization for you so you do not need to manually scatter useMemo, useCallback, and React.memo across normal component code.

That headline is accurate, but the implementation detail is what makes the feature interesting.

According to the React team's design goals, the compiler is intentionally built to preserve React's existing declarative programming model rather than replace it. The stated goal is to remove concepts from day-to-day React authoring, not add more. In practical terms, that means your component code stays recognizable. You still write React. The compiler decides where it can safely reuse work.

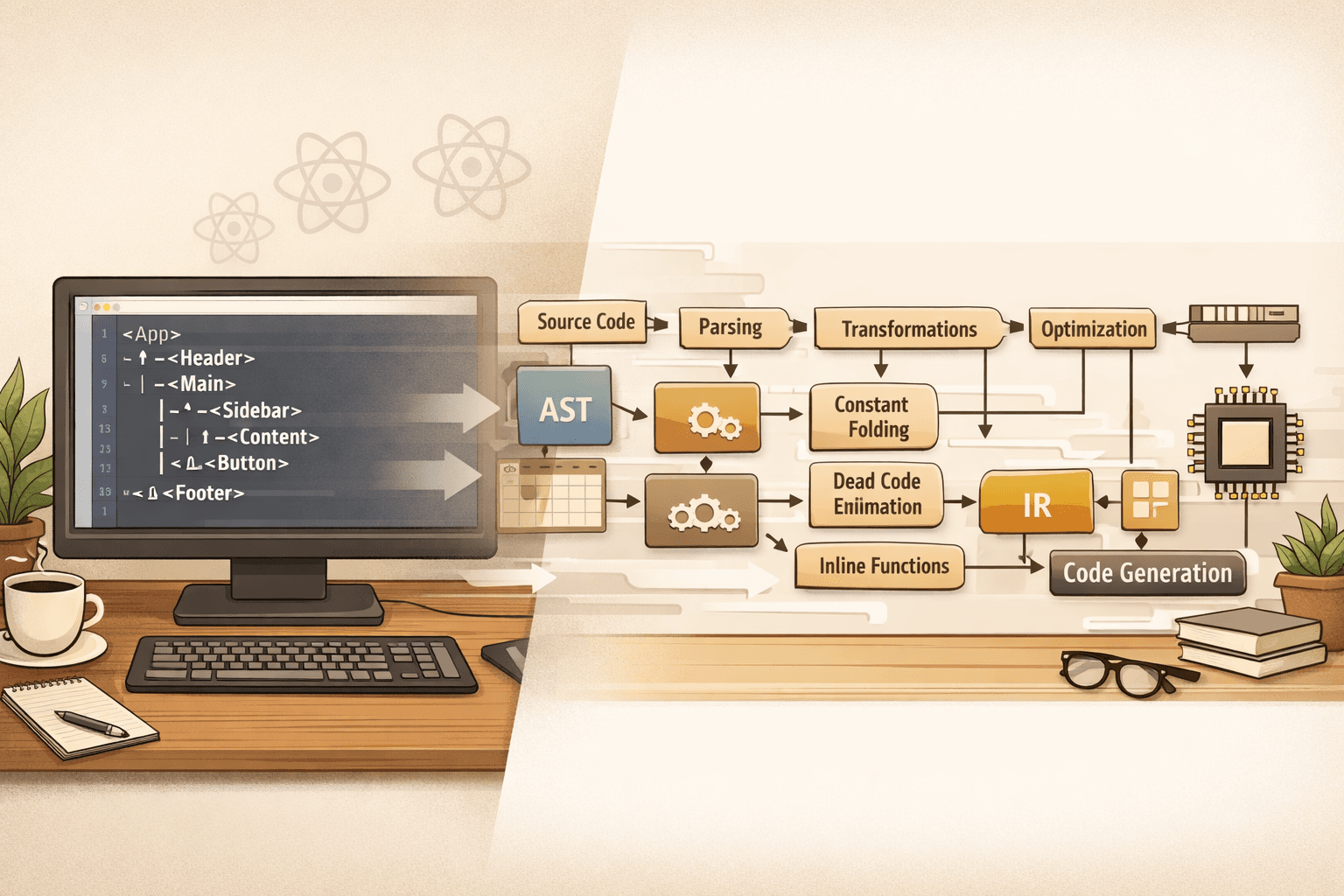

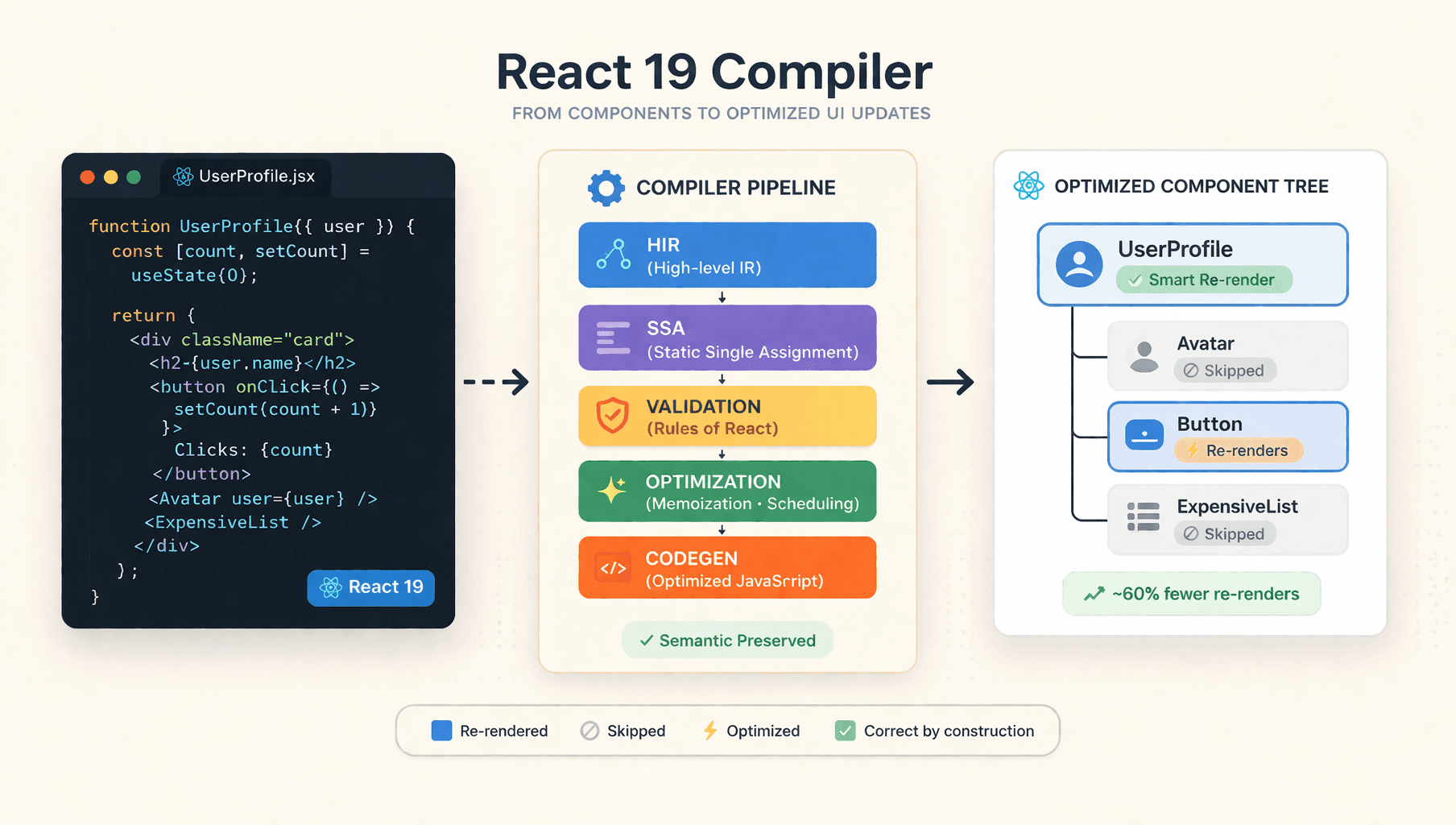

Under the hood, the pipeline is more like a traditional compiler than a runtime patch:

High-level intermediate representation

The compiler first lowers Babel AST input into its own high-level intermediate representation, or HIR. React's design docs say this representation preserves JavaScript order-of-evaluation semantics while also keeping high-level constructs intact. It retains distinctions like logical expressions, ternaries, and loop forms instead of flattening everything into a less readable internal model.

That matters for two reasons:

- The compiler can optimize without fundamentally distorting the original meaning of the code.

- The output stays closer to what developers wrote, which is better for debugging and tooling.

SSA conversion and validation

From there, the compiler converts identifiers into SSA, or Static Single Assignment, form. That step makes data flow easier to reason about because each value can be tracked more precisely through the function.
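As a rough illustration of what SSA renaming means, the toy sketch below versions each variable on every assignment. It only handles straight-line code (real SSA needs phi nodes at branch merges), and `Assignment` and `toSSA` are hypothetical names; this is not the compiler's implementation.

```typescript
// Toy SSA renaming for straight-line code. Illustration only.
interface Assignment {
  target: string; // variable being assigned
  operands: string[]; // variables read on the right-hand side
}

function toSSA(program: Assignment[]): Assignment[] {
  const version = new Map<string, number>();
  // Current SSA name for a variable; never-assigned names (inputs) get version 0.
  const current = (name: string): string => `${name}_${version.get(name) ?? 0}`;

  return program.map(({ target, operands }) => {
    // Reads refer to the latest version that exists before this assignment.
    const renamedOperands = operands.map((name) => current(name));
    // Each write creates a new version of the target.
    version.set(target, (version.get(target) ?? 0) + 1);
    return { target: current(target), operands: renamedOperands };
  });
}

// total = price; total = total + tax; label = total
const ssa = toSSA([
  { target: "total", operands: ["price"] },
  { target: "total", operands: ["total", "tax"] },
  { target: "label", operands: ["total"] },
]);

const ssaLines = ssa.map((a) => `${a.target} <- ${a.operands.join(", ")}`);
console.log(ssaLines);
```

After renaming, the two writes to `total` become distinct names, so it is unambiguous which value `label` was derived from.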

The compiler also validates whether the input follows React's expectations. React's own docs and design notes make this explicit: the compiler depends on code following the Rules of React, including pure render logic and valid hook usage. Conditional hook calls or unsafe patterns are not just style issues anymore. They determine whether a component can be optimized at all, because code the compiler cannot safely compile is left unoptimized.

This is one of the quiet but important shifts introduced by the compiler. Performance is no longer separate from correctness. Code that is architecturally sloppy is harder to optimize automatically.
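A small sketch of why purity matters for automatic reuse. Plain functions stand in for component render logic here, and `impureBadge` and `pureBadge` are hypothetical names. The point is only that caching a result is safe when the same inputs always produce the same output.

```typescript
// Plain functions stand in for component render logic.
let renderCount = 0;

// Impure: the output depends on, and mutates, state outside the function.
// Skipping a call would change what the user sees.
function impureBadge(label: string): string {
  renderCount += 1;
  return `${label} (render #${renderCount})`;
}

// Pure: the same inputs always give the same output, so a cached result
// is indistinguishable from a fresh one.
function pureBadge(label: string, count: number): string {
  return `${label} (${count})`;
}

const impureIsRepeatable = impureBadge("Inbox") === impureBadge("Inbox");
const pureIsRepeatable = pureBadge("Inbox", 3) === pureBadge("Inbox", 3);

console.log({ impureIsRepeatable, pureIsRepeatable });
```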

Optimization passes and reactive scopes

After validation, the compiler runs optimization passes such as dead code elimination and constant propagation. It then identifies reactive scopes: parts of the program whose values change together and therefore need to be re-evaluated together.

This is the key to the compiler's practical value. Rather than re-running broad sections of the tree because a parent updated, the compiler can often preserve stable output and skip work more precisely. React refers to this behavior as a form of fine-grained reactivity.

In plain terms, the compiler gets closer to answering the question humans used to answer manually: "Which parts of this render actually depend on the state that changed?"
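The idea of reactive scopes can be approximated by hand. The sketch below is a simplified model, not actual compiler output: it assumes a per-instance cache array and two independent scopes, one depending on `items` and `query`, the other on `theme`. All names (`createCache`, `renderList`, `View`) are hypothetical.

```typescript
// Hand-written approximation of scope-level caching; real output differs in detail.
type Cache = unknown[];
const EMPTY = Symbol("empty");

function createCache(size: number): Cache {
  return new Array<unknown>(size).fill(EMPTY);
}

interface View {
  visible: string[];
  title: string;
}

let filterRuns = 0;

function renderList(cache: Cache, items: string[], query: string, theme: string): View {
  // Scope 1: recomputed only when items or query change.
  let visible: string[];
  if (cache[0] !== items || cache[1] !== query) {
    filterRuns += 1;
    visible = items.filter((item) => item.includes(query));
    cache[0] = items;
    cache[1] = query;
    cache[2] = visible;
  } else {
    visible = cache[2] as string[];
  }

  // Scope 2: recomputed only when theme changes.
  let title: string;
  if (cache[3] !== theme) {
    title = `Results (${theme})`;
    cache[3] = theme;
    cache[4] = title;
  } else {
    title = cache[4] as string;
  }

  return { visible, title };
}

const cache = createCache(5);
const items = ["apple", "apricot", "banana"];
const firstRender = renderList(cache, items, "ap", "light");
const secondRender = renderList(cache, items, "ap", "dark"); // only theme changed

console.log(filterRuns, secondRender.title);
```

When only `theme` changes, the filtered array keeps its identity and the filter does not run again, which is the behavior manual useMemo calls were previously written to achieve.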

Code generation back into React code

Once the compiler has transformed and optimized the function, it generates code back into AST form for the build pipeline. The application still ships normal JavaScript, but that JavaScript now contains memoization behavior the compiler inferred automatically.

That is the architectural win. You author the simple version. The build produces the optimized version.

What it optimizes well

The official React introduction highlights two main optimization targets.

First, it helps skip cascading re-renders. In many React apps, a parent update causes children to re-render even when those children do not meaningfully depend on the changed state. The compiler can often reuse prior JSX output and avoid re-rendering unaffected descendants.

Second, it memoizes expensive calculations performed during rendering inside components or hooks. If you perform costly derivation work in a component body, the compiler may preserve the result when inputs have not changed.

For many codebases, that covers a large share of the memoization that used to be written by hand.

What it does not eliminate

This is where teams need to stay disciplined.

The compiler does not memoize every function in your codebase. React explicitly notes that its memoization applies to React components and hooks, not arbitrary helper functions everywhere. It also does not create a shared cache across multiple components. If the same expensive non-React computation is called in several places, you may still need a different caching strategy.
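One possible shape for such a strategy is a module-level cache that every caller shares. This is a minimal sketch under that assumption; `slowSquareSum` and `memoizeByKey` are hypothetical helpers, and the cache here grows without bound, which a production version would need to address.

```typescript
// Module-level cache shared by every caller. Hypothetical helper names.
let computeCalls = 0;

// Stand-in for an expensive non-React computation.
function slowSquareSum(n: number): number {
  computeCalls += 1;
  let total = 0;
  for (let i = 1; i <= n; i++) total += i * i;
  return total;
}

// Caches results by argument; suitable only for primitive keys.
function memoizeByKey<A extends string | number, R>(fn: (arg: A) => R): (arg: A) => R {
  const cache = new Map<A, R>();
  return (arg: A): R => {
    if (cache.has(arg)) return cache.get(arg) as R;
    const result = fn(arg);
    cache.set(arg, result);
    return result;
  };
}

const sharedSquareSum = memoizeByKey(slowSquareSum);

// Two different "components" asking for the same derived value.
const headerValue = sharedSquareSum(1000);
const footerValue = sharedSquareSum(1000);

console.log(headerValue === footerValue, computeCalls);
```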

Likewise, old performance fundamentals still matter. React's legacy performance guidance remains relevant for problems the compiler does not address, such as virtualizing long lists. If you render thousands of rows, compiler-driven memoization is not a substitute for windowing.
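The core of windowing is arithmetic the compiler cannot do for you: deciding which rows to render at all. A minimal sketch, assuming fixed-height rows and a hypothetical `visibleRange` helper (real projects usually rely on a virtualization library instead):

```typescript
interface WindowRange {
  start: number; // first row index to render
  end: number; // one past the last row index to render
}

// Computes which fixed-height rows intersect the viewport, plus a small overscan.
function visibleRange(
  scrollTop: number,
  viewportHeight: number,
  rowHeight: number,
  rowCount: number,
  overscan = 2,
): WindowRange {
  const firstVisible = Math.floor(scrollTop / rowHeight);
  const lastVisibleExclusive = Math.ceil((scrollTop + viewportHeight) / rowHeight);
  return {
    start: Math.max(0, firstVisible - overscan),
    end: Math.min(rowCount, lastVisibleExclusive + overscan),
  };
}

// 10,000 rows of 40px each, a 600px viewport scrolled to 1200px.
const range = visibleRange(1200, 600, 40, 10000);
console.log(range, range.end - range.start);
```

In this example only 19 of the 10,000 rows are rendered, which is a reduction no amount of memoization provides.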

So the right conclusion is not "manual optimization is dead." The right conclusion is "routine component-level memoization is no longer the first tool you should reach for."

How to adopt it without creating regressions

The safest way to think about React Compiler adoption is as a migration program, not a toggle.

React's incremental adoption guide recommends starting small for a reason. Compiler rollout is easier when you measure behavior, identify incompatible code, and expand coverage deliberately.

A practical rollout usually looks like this:

Start with a narrow surface area

Use Babel overrides to compile a specific directory or feature slice first. This gives you a contained set of components to validate, benchmark, and debug.

This is the correct approach for larger products because it avoids mixing architecture cleanup, performance testing, and full-app rollout into one risky change.
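A directory-scoped rollout might look roughly like the following. The object is shown as a TypeScript constant so it can be read on its own; in a real project it would typically be the export of babel.config.js. The `./src/modern` path is a hypothetical choice for the first slice.

```typescript
// Shape of a Babel config with a directory-scoped override.
const babelConfig = {
  // Plugins that should apply to every file would be listed here.
  plugins: [] as string[],
  overrides: [
    {
      // Hypothetical directory chosen for the first rollout slice.
      test: "./src/modern/**/*.{js,jsx,ts,tsx}",
      // Only files matching `test` are run through the compiler.
      plugins: ["babel-plugin-react-compiler"],
    },
  ],
};

console.log(JSON.stringify(babelConfig, null, 2));
```

Expanding coverage then becomes a matter of widening the `test` pattern or adding further override entries.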

Use annotation mode when you want strict control

React supports an annotation mode where only functions marked with "use memo" are compiled. That is useful when you want to opt stable components in individually instead of enabling the compiler for an entire area of the app.

This approach is slower operationally, but it gives teams very strong control over scope.
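A sketch of what opting in looks like, assuming the plugin is configured with `compilationMode: "annotation"`. The components below are hypothetical and return strings rather than JSX only to keep the example free of a React import.

```typescript
// Plugin entry for annotation mode, as it would appear in a Babel plugins list.
const annotationPluginEntry = [
  "babel-plugin-react-compiler",
  { compilationMode: "annotation" },
] as const;

// Opted in: the directive is the first statement of the function body.
function PriceTag(props: { amount: number; currency: string }): string {
  "use memo";
  return `${props.amount.toFixed(2)} ${props.currency}`;
}

// Not annotated: left untouched by the compiler in annotation mode.
function Footer(): string {
  return "All prices include tax";
}

console.log(annotationPluginEntry[1].compilationMode);
console.log(PriceTag({ amount: 5, currency: "EUR" }), Footer());
```

The directive has no effect at runtime on its own; it is only a marker the compiler looks for at build time.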

Opt out when necessary

React also supports "use no memo" as an escape hatch. Use it when a component is temporarily incompatible, difficult to debug, or blocked by a third-party integration. The key word is temporarily. If a component needs opt-out forever, that usually deserves an explicit architectural note.
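An opt-out might look like this. `LegacyChart` is a hypothetical component, again returning a string to avoid a React import; the comment next to the directive is one lightweight way to record why the opt-out exists.

```typescript
// Hypothetical component wrapping an integration the compiler cannot yet handle.
function LegacyChart(props: { points: number[] }): string {
  "use no memo"; // TODO: remove once the chart integration is migrated
  return `chart with ${props.points.length} points`;
}

console.log(LegacyChart({ points: [1, 2, 3] }));
```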

Use runtime gating for production experiments

The compiler configuration supports gating with feature flags. That opens the door to A/B rollout, segmented exposure, and safer production validation. If you operate at scale, this is the responsible way to prove the compiler's value in your environment instead of assuming benchmark results from someone else's stack apply to yours.
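A rough sketch of how gating fits together, assuming the `gating` option takes a module `source` and an `importSpecifierName`. The module name `my-feature-flags` and the cohort logic are hypothetical; in a real setup `isCompilerEnabled` would be exported from that module and backed by your feature-flag service.

```typescript
// Plugin options: compiled functions are guarded by a runtime call to the
// named function from the given module.
const gatedCompilerOptions = {
  gating: {
    source: "my-feature-flags", // hypothetical module name
    importSpecifierName: "isCompilerEnabled",
  },
};

// Contents of the hypothetical "my-feature-flags" module.
const enabledCohorts = new Set<string>(["internal", "beta"]);
let currentCohort = "beta"; // stand-in for a real flag lookup

function isCompilerEnabled(): boolean {
  return enabledCohorts.has(currentCohort);
}

console.log(gatedCompilerOptions.gating.importSpecifierName, isCompilerEnabled());
```

Because the decision happens at runtime, the same build can serve both the compiled and the original code paths, which is what makes A/B comparison possible.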

Tune failure behavior and observability

React's configuration reference documents options like panicThreshold and logger. Those are not minor details. In real delivery pipelines, they help answer two operational questions:

- Should a problematic component fail the build or be skipped?

- How will the team observe what the compiler is compiling successfully?

That is the difference between experimentation and maintainable adoption.
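As an illustration of both options together, the sketch below collects compile results into a simple stats object. It assumes the logger receives a filename and an event with a `kind` field such as "CompileSuccess" or "CompileError"; the `CompilerEvent` type is reduced to what this example reads, and the events at the bottom are hand-made rather than produced by a real build.

```typescript
// Event shape reduced to the field this sketch reads; real events carry more detail.
interface CompilerEvent {
  kind: string;
}

const compileStats = { compiled: [] as string[], failed: [] as string[] };

const observableCompilerOptions = {
  // "none" skips components that fail to compile instead of failing the build.
  panicThreshold: "none",
  logger: {
    logEvent(filename: string | null, event: CompilerEvent): void {
      const file = filename ?? "<unknown>";
      if (event.kind === "CompileSuccess") compileStats.compiled.push(file);
      if (event.kind === "CompileError") compileStats.failed.push(file);
    },
  },
};

// Hand-made events to exercise the logger outside a real build.
observableCompilerOptions.logger.logEvent("src/Cart.tsx", { kind: "CompileSuccess" });
observableCompilerOptions.logger.logEvent("src/LegacyChart.tsx", { kind: "CompileError" });

console.log(compileStats);
```

A stricter panicThreshold is a reasonable choice in CI once a directory is expected to compile cleanly, while the logger output gives a running picture of coverage.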

What changes in day-to-day React engineering

The compiler does not just change build output. It changes team habits.

Code reviews should become less obsessed with reflexive useCallback usage and more focused on component purity, effect correctness, and actual measured bottlenecks. A simpler component is now more likely to be the right component, provided it follows React's rules cleanly.

That leads to a better review standard:

- Prefer straightforward component code first.

- Add manual memoization only when there is a concrete reason.

- Do not remove existing memoization from legacy code casually.

- Test behavior before cleanup, especially around effect dependencies.

- Profile before claiming a performance win.

React itself warns that for existing code, leaving manual memoization in place is often safer unless you have verified that removing it does not change compilation output or runtime behavior.

That point is worth emphasizing. The compiler reduces the need to add memoization to new code. It does not justify a mass deletion campaign in old codebases.

When manual memoization still makes sense

Even in a compiler-first React stack, there are still valid cases for manual control.

Use useMemo or useCallback when:

- A memoized value is intentionally used as an effect dependency.

- You need exact stability guarantees for integration boundaries.

- You are managing behavior that should not change even if the compiler's heuristics would choose differently.

- You are dealing with performance outside React's component-and-hook optimization boundary.

That is a healthier future for these APIs. They become targeted escape hatches, not ritual boilerplate.
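The effect-dependency case can be sketched without React. The model below assumes dependencies are compared entry by entry with Object.is, and uses a hand-rolled cache in `renderMemoized` to play the role of useMemo. All names are hypothetical.

```typescript
// Simplified model of how effect dependencies are compared between renders.
function depsChanged(prev: readonly unknown[], next: readonly unknown[]): boolean {
  return prev.length !== next.length || next.some((dep, i) => !Object.is(dep, prev[i]));
}

interface Query {
  userId: number;
  pageSize: number;
}

// Without memoization: a new object on every render.
function renderUnstable(userId: number): Query {
  return { userId, pageSize: 20 };
}

// With memoization: the previous object is reused while the input is unchanged,
// which is the stability useMemo is being relied on for in this case.
let lastUserId: number | undefined;
let lastQuery: Query | undefined;
function renderMemoized(userId: number): Query {
  if (lastQuery === undefined || lastUserId !== userId) {
    lastUserId = userId;
    lastQuery = { userId, pageSize: 20 };
  }
  return lastQuery;
}

// Would an effect depending on the query re-run between two identical renders?
const unstableRefires = depsChanged([renderUnstable(7)], [renderUnstable(7)]);
const memoizedRefires = depsChanged([renderMemoized(7)], [renderMemoized(7)]);

console.log({ unstableRefires, memoizedRefires });
```

When an effect's correctness depends on that identity staying put, stating it explicitly in code is easier to review than relying on inferred behavior.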

Summary

The React 19 Compiler is important not because it makes React "faster by magic," but because it relocates common optimization work from handwritten component code to a build-time analysis pipeline.

That shift has three practical consequences:

- New React code can usually be written more directly.

- Performance work becomes more architectural and less ceremonial.

- Adoption success depends on code quality, rollout discipline, and profiling.

The biggest mistake teams can make is treating the compiler as permission to stop thinking about performance. The better interpretation is narrower and more useful: React now automates a large class of memoization decisions that developers were never especially good at making consistently by hand.

FAQ

Does React Compiler make useMemo and useCallback obsolete?

No. React recommends relying on the compiler for most new memoization needs, but useMemo and useCallback remain useful as escape hatches when you need precise control, especially around effect dependencies or specialized integration points.

Should I remove all existing memoization from a legacy codebase?

No. React explicitly advises caution here. Existing memoization can affect compilation output and runtime behavior, so blanket removal is a bad migration strategy. Leave it in place unless tests and profiling show it is safe to simplify.

Can the compiler optimize every expensive function in my app?

No. The compiler focuses on React components and hooks. It does not act as a universal cache for arbitrary helper functions, and its memoization is not shared automatically across different components.

Does the compiler replace other performance techniques like list virtualization?

No. React's older performance guidance still applies for problems like rendering very large lists. If the bottleneck is too many DOM nodes or too much rendered output, virtualization is still the correct tool.

Is full adoption required on day one?

No. React documents an incremental path using directory-based adoption, "use memo" opt-in, "use no memo" opt-out, and feature-flag gating. That is the right way to roll it out in production applications.

Conclusion

The React 19 Compiler marks the end of manual memoization as a default coding style, not the end of performance engineering.

That is a meaningful distinction. Frontend teams should spend less time writing defensive hook wrappers and more time building clean components, profiling real bottlenecks, and fixing architectural issues the compiler cannot paper over. If your code follows the Rules of React, the compiler gives you a stronger default. If it does not, the compiler will surface that reality quickly.

The long-term value here is not just fewer re-renders. It is less accidental complexity in the codebase.

That is a better bargain than another layer of performance folklore.